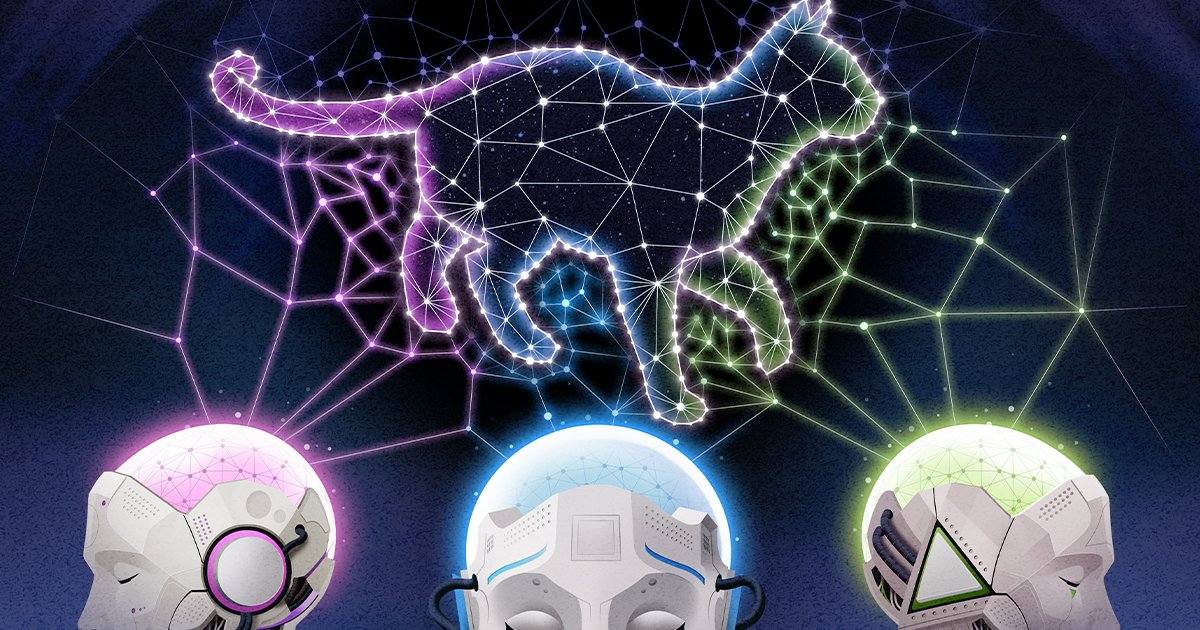

Artificial intelligence systems designed for wildly different purposes, from interpreting images to generating human language, may be discovering the same fundamental truths about how to represent the world. Recent research indicates that as these neural networks grow in size and capability, their internal representations are beginning to resemble one another, converging on what some researchers describe as a shared, almost "Platonic," blueprint for reality.

The Convergence Hypothesis

This phenomenon challenges the intuitive assumption that a model built to recognize cats in photographs would organize its knowledge in a completely different way than a model trained to write poetry or translate languages. Instead, evidence is mounting that powerful AI systems, regardless of their initial training data and objectives, are finding their way to similar underlying structures for processing and storing information. This suggests there might be optimal, or at least highly efficient, ways for computational systems to model the complex patterns of our universe.

Bridging Vision and Language

The research focuses on comparing the internal representations, or "latent spaces," of different kinds of models, such as large language models (LLMs) and computer vision networks. These latent spaces are the high-dimensional vector spaces in which a model encodes its inputs. Researchers are finding that the geometric relationships within these spaces, such as which items sit close together and which sit far apart, show striking similarities across model types. For instance, the way a vision model clusters representations of "water" might closely mirror how a language model groups words and concepts related to liquidity, flow, and transparency.
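To make "similar geometry" concrete, here is a minimal sketch of one widely used similarity score, linear centered kernel alignment (CKA). The article does not say which metric the researchers used, so treat this as an illustration rather than their method. The arrays are synthetic stand-ins for model activations, and the names `vision_like` and `language_like` are hypothetical.

```python
import numpy as np

def linear_cka(X, Y):
    """Linear CKA between two representation matrices.

    X has shape (n_samples, d1) and Y has shape (n_samples, d2).
    Row i of X and row i of Y must describe the same input.
    """
    # Center each feature so the score ignores constant offsets.
    X = X - X.mean(axis=0, keepdims=True)
    Y = Y - Y.mean(axis=0, keepdims=True)
    cross = np.linalg.norm(X.T @ Y, ord="fro") ** 2
    norm_x = np.linalg.norm(X.T @ X, ord="fro")
    norm_y = np.linalg.norm(Y.T @ Y, ord="fro")
    return cross / (norm_x * norm_y)

rng = np.random.default_rng(0)

# Synthetic stand-in for one model's embeddings of 500 inputs.
vision_like = rng.normal(size=(500, 16))

# A second "model" with the same geometry in a rotated, rescaled basis.
rotation, _ = np.linalg.qr(rng.normal(size=(16, 16)))
language_like = 3.0 * vision_like @ rotation

# A third "model" whose embeddings are unrelated to the first.
unrelated = rng.normal(size=(500, 32))

score_rotated = linear_cka(vision_like, language_like)   # close to 1
score_unrelated = linear_cka(vision_like, unrelated)     # small

print(f"same geometry, different basis: {score_rotated:.3f}")
print(f"unrelated representations:      {score_unrelated:.3f}")
```

The point of the rotated case is that two models can store information along entirely different coordinates and still score as nearly identical, because the score depends on the relationships between inputs rather than on individual dimensions.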

This convergence is particularly evident in the most advanced, largest-scale models. It implies that beyond a certain threshold of complexity and data, the pressure to build an efficient and generalizable model of reality may push different AI architectures toward a common solution. This is somewhat analogous to how abstract mathematics often finds unexpected applications across disparate scientific fields, revealing a universal underlying logic.

Implications for Understanding Intelligence

The findings have profound implications. If the convergence holds up, it points toward the existence of universal computational principles for intelligence, whether artificial or biological. It suggests that there may be only a limited set of highly effective ways to build a coherent model of a complex world from sensory or linguistic data. This research intersects with neuroscience, as scientists compare these AI representations to how biological brains might organize information, searching for common computational motifs.

Furthermore, this shared blueprint could accelerate AI development. Understanding a potential "best" way to structure knowledge could lead to more efficient training methods, more robust models, and better techniques for transferring learning from one domain to another. It also raises philosophical questions about the nature of the reality these models are capturing. Are they approximating an objective mathematical structure inherent in our universe, in the way theoretical physics seeks a unified framework?

Challenges and Future Directions

However, the research is still in its early stages. Demonstrating true convergence, rather than superficial similarity, requires careful analysis of these high-dimensional internal states. Researchers must also determine whether the effect holds across an even broader array of AI systems, including those that control physical agents such as humanoid robots, which still struggle with dexterous tasks. The comparison to natural systems is also compelling: does the neural circuitry in an animal's visual cortex share organizational principles with a silicon-based vision AI? The answers could blur the lines between artificial and natural intelligence.
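One simple guard against mistaking superficial similarity for real alignment is to compare a similarity score against a baseline in which the pairing between the two models' inputs is deliberately scrambled. The sketch below does this with a nearest-neighbor overlap score. It is an illustrative assumption, not the procedure from the research described here, and the data is synthetic.

```python
import numpy as np

def knn_indices(feats, k):
    """Indices of each row's k nearest neighbors by cosine similarity."""
    normed = feats / np.linalg.norm(feats, axis=1, keepdims=True)
    sim = normed @ normed.T
    np.fill_diagonal(sim, -np.inf)  # a point is not its own neighbor
    return np.argsort(-sim, axis=1)[:, :k]

def mutual_knn_overlap(A, B, k=10):
    """Average fraction of neighbors shared by the two spaces.

    Row i of A and row i of B must describe the same input.
    """
    nn_a = knn_indices(A, k)
    nn_b = knn_indices(B, k)
    shared = [len(set(a) & set(b)) / k for a, b in zip(nn_a, nn_b)]
    return float(np.mean(shared))

rng = np.random.default_rng(0)
n = 300

# Synthetic stand-ins for two models' embeddings of the same inputs:
# model B is a rotated, slightly noisy copy of model A.
model_a = rng.normal(size=(n, 16))
rotation, _ = np.linalg.qr(rng.normal(size=(16, 16)))
model_b = model_a @ rotation + 0.01 * rng.normal(size=(n, 16))

aligned = mutual_knn_overlap(model_a, model_b)

# Baseline: break the input pairing by shuffling the rows of model B.
shuffled = mutual_knn_overlap(model_a, model_b[rng.permutation(n)])

print(f"aligned pairing:  {aligned:.3f}")
print(f"shuffled pairing: {shuffled:.3f}")
```

A score that barely exceeds the shuffled baseline would suggest the two spaces only look alike in aggregate, while a large gap indicates that the same inputs really do end up with the same neighbors in both models.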

Ultimately, this line of inquiry transforms AI from a purely engineering pursuit into a scientific tool for discovery. By observing what solutions these models independently arrive at as they scale, we are not just building better algorithms—we are potentially uncovering fundamental truths about information, representation, and the structure of reality itself. The convergence of AI models may be giving us a unique lens through which to examine the very fabric of understanding.